Essentials of an MLOps platform Part 2: Infrastructure

Machine learning has become an essential tool for businesses to optimize and automate processes. The term MLOps has already been around for some time now, but that does not mean that it is easy to implement. I will not argue whether machine learning is the solution to your problems. However, if it seems useful to have a proper operational process for your ML workloads, or if you are interested in the topic, please read on!

This blog will cover the implementation of the underlying infrastructure needed to create an MLOps platform using cloud agnostic tooling on GKE (Google Kubernetes Engine, from now on called Kubernetes/K8s). This blog is part of a series that ties together architecture (the-essentials-of-an-mlops-architecture), infrastructure (this blog) and tooling (future blog, will add link here later).

The blog consists of two main parts:

- Functional description of all the moving parts

- Code walkthrough of the implementation

All code referenced here can be found in the public GitHub repository: https://github.com/BigDataRepublic/mlops-platform-public

Dissecting the platform

As mentioned, this blog will describe the infrastructure required for a cloud agnostic MLOps platform on GKE. There are some important things here that need further elaboration.

What is the infrastructure, and what is the MLOps platform?

The MLOps platform is what will run on the infrastructure. This blog focuses on provisioning everything that is needed to make this possible. That means three things:

- Create all required GCP components

- Configure any external settings, such as CI/CD tokens and DNS records

- Bootstrap the K8s cluster for GitOps

What does cloud agnostic mean in this sense?

The infrastructure is definitely not cloud agnostic. Although the concepts are generic, the infrastructure implementation is clearly GCP (Google Cloud Platform) specific. The tooling deployed on K8s will be cloud agnostic and we will not depend on Google components for the MLOps part, but this will be described in the next blog post.

Understanding the requirements

The most important features of the core platform are related to automation and security:

- Platform is only accessible through Identity-Aware Proxy (IAP)

- ArgoCD is used as GitOps tool for all K8s workloads

- Automate all steps that can be automated

This essentially means that there are some tough cloud provisioning requirements in terms of automation and security. In order to keep track of all configurations and components created on GCP, we will use Terraform for cloud provisioning.

Terraform will only be responsible for GCP provisioning, because we want ArgoCD to be responsible for Kubernetes and it is best not to mix up these responsibilities. In order to do the minimum bootstrapping of the Kubernetes cluster we will use some plain CLI commands to get ArgoCD up and running.

Provisioning with Terraform: Description of core components

Terraform is currently one of the most popular Infrastructure as Code tools. I will not dive into Terraform itself, but you can find lots of great information sources online. For the largest part we will depend on terraform-google-modules that provision secure instances of:

- The GKE cluster: Google/beta-private-cluster

- SSH access through: Google/bastion-host

- Basic networking: Google/vpn, Google/cloud-nat

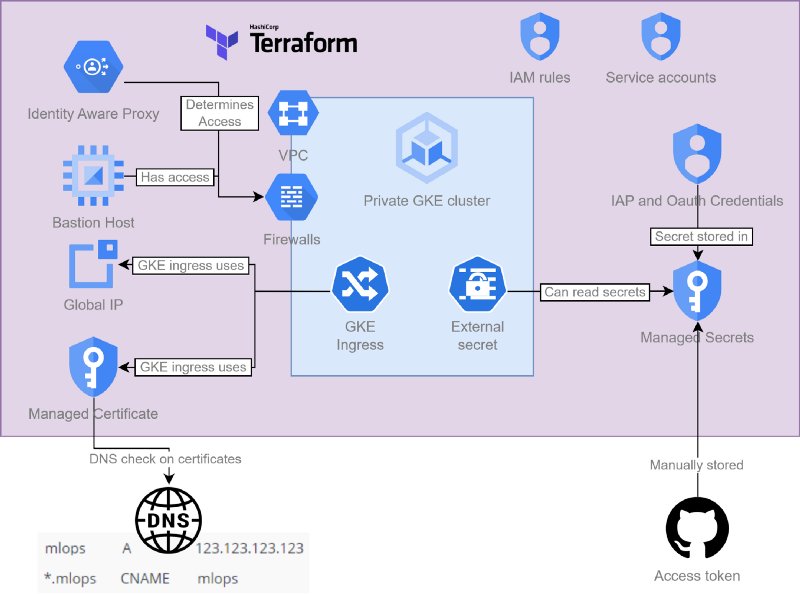

Putting these together, we get the setup shown below:

The most important connections between the different subcomponents provisioned by Terraform

Besides these modules Terraform will also provision other specific dependencies that the GKE cluster will use to configure the ingress:

- Public IP address and managed certificate

- Secrets and Credentials stored in Google Secret Manager

- Necessary service accounts and rules to allow the previous two

Configuring Kubernetes: How does that work?

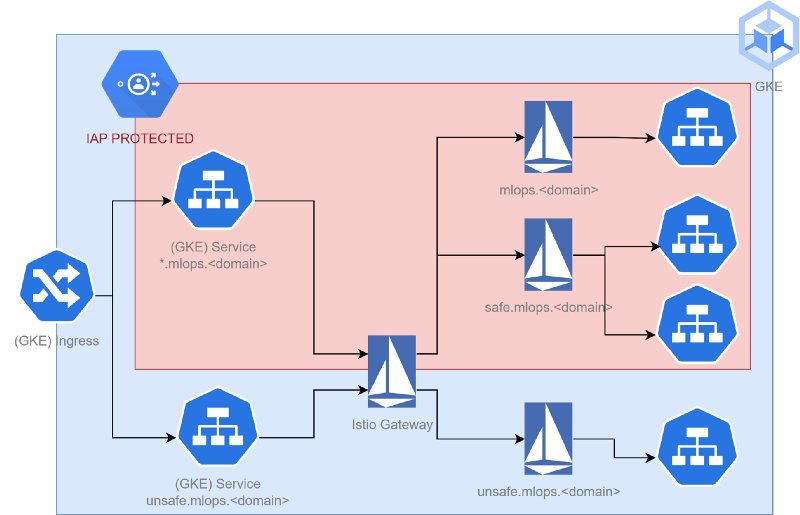

Now that the necessary external components are created, the cluster needs to be made accessible. Because we are using GKE and want it to be secured through IAP, it is necessary to make use of the GKE specific Ingresses. A clean way to enable this is to use Istio and implement it as suggested by the GCP documentation. I will not go into Istio in too much detail; just know that it allows you to do routing as shown in the picture below:

IAP protection used to secure specific Istio paths

The major reason to use Istio is that, unlike with an Nginx controller, it is not possible to attach multiple Ingress objects to a single GKE Ingress controller. That would mean that for each added subdomain or route we would need to update the one generic Ingress object, which becomes very troublesome later on when using GitOps to manage the cluster and routing. With Istio we can instead create multiple VirtualService objects to get the same result.

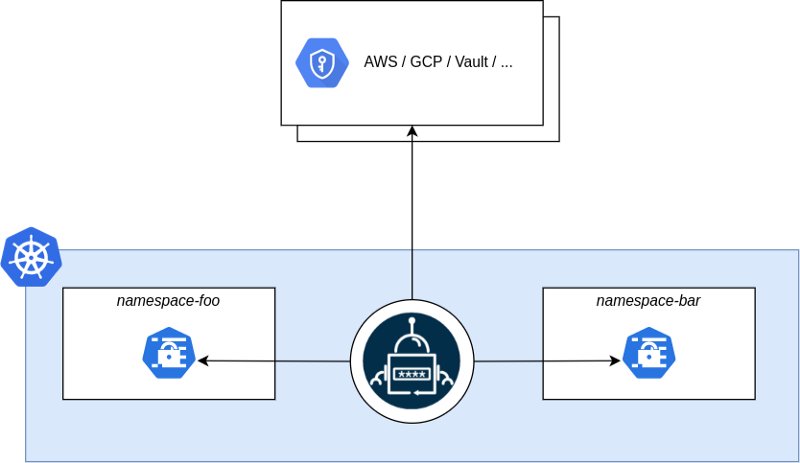

We will use external secrets to import GCP secrets onto K8s

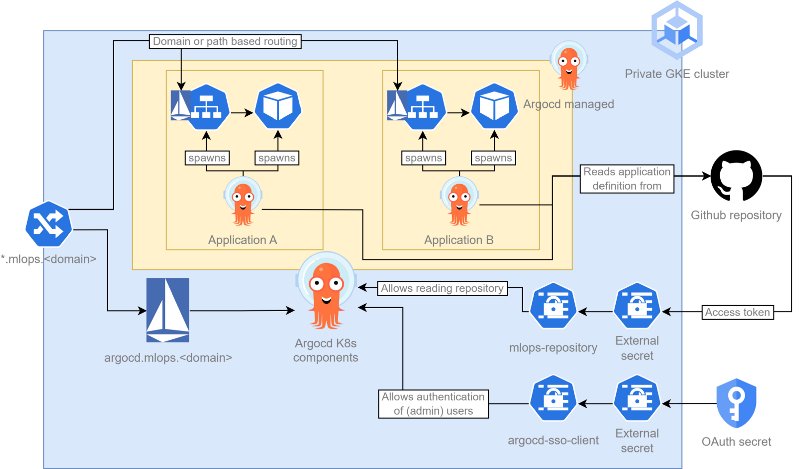

Besides Istio, we will also deploy K8s External Secrets, which replicates GCP secrets as Kubernetes Secrets (using a service account created by Terraform). With these components in place, it is finally possible to install ArgoCD as the GitOps tool. ArgoCD will read from a separate repository to deploy workloads on the Kubernetes cluster (using credentials provided by External Secrets). How this works functionally can be seen in the picture below.

The next blog post will go deeper into the ArgoCD setup. I will put a reference here when it is available.

ArgoCD has access to the necessary secrets so that it can poll an external repository and deploy all Kubernetes workloads that it is responsible for

How to implement an MLOps platform

To deploy this project, it is assumed that you have created a Google Cloud Project. Note down the project ID, as it is needed to configure the Terraform code. You also need a terminal with the terraform, gcloud and kubectl command line interfaces installed. The following commands should all successfully give some output:

terraform version

gcloud version

kubectl version
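The three checks above can also be scripted. The snippet below is a minimal sketch, assuming a POSIX shell; the function name missing_clis is made up for this example and is not part of the repository. It only tests whether each CLI is on the PATH, not whether the installed version is recent enough.

```shell
# Print the name of every CLI from the argument list that is not on PATH.
missing_clis() {
  for cli in "$@"; do
    command -v "$cli" >/dev/null 2>&1 || printf '%s\n' "$cli"
  done
}

# Check the three tools this walkthrough depends on.
missing=$(missing_clis terraform gcloud kubectl)
if [ -n "$missing" ]; then
  echo "Missing CLIs, install these before continuing:"
  echo "$missing"
else
  echo "All required CLIs are installed."
fi
```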

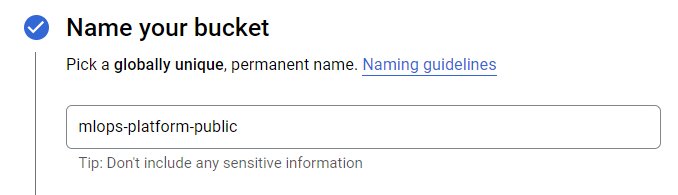

If any of these commands fails, follow the corresponding guide to install the missing CLI: Install terraform, Install gcloud or Install kubectl. It is good practice to store your Terraform state file in cloud object storage. By default the code uses a GCP bucket named mlops-platform-public, created as follows:

Bucket created for terraform state, other options do not matter too much but versioning might be a good idea

From this point on we will use the code from here: BigDataRepublic/mlops-platform-public. The project is split up into two major parts: the gke-terraform folder, which is used to create the underlying infrastructure, and the argocd-provisioning folder, which is used to create the required Kubernetes components.

The rest of the blog will provide some extra information about the code and how it fits together. Alternatively, you can clone the repository directly and follow the README.md instead.

Deploying the infrastructure with Terraform

Have a look at the code snippets in gke-terraform. All settings are set through variables. Most of them can be kept the same, but you need to set the following:

Make sure to configure your Terraform backend to use the right bucket:

If you are configuring a new GCP project, you probably have not configured an IAP brand yet. As mentioned in the documentation, patching and deleting an iap_brand is not supported, so the following is only valid for the first run:

Have a look at the other components that get created. These include the Kubernetes cluster, networking, IP address and certificates, but also managed secrets together with necessary service accounts that let specific Kubernetes components read the secrets automatically. In the below Gists, the secrets and the load balancer requirements are created:

Sadly, the Google-managed certificate used here does not support wildcards, so every new subdomain needs to be added to this certificate.

Now run the following commands to create the infrastructure (replace <gke-project-id> with your own project ID).

# Login with gcloud

gcloud auth login

gcloud config set project <gke-project-id>

cd gke-terraform

terraform init

# First configure the project services, otherwise terraform will fail to plan

terraform apply --target module.enabled_google_apis

terraform apply

After running these steps, the cluster is reachable through the Bastion Host. Some convenience Terraform outputs are available to create an SSH tunnel as follows:

# First configure the kubeconfig entry for the private cluster:

$(terraform output get_credentials_command | tr -d '"')

# Then open a tunnel to the bastion host:

$(terraform output bastion_ssh_command | tr -d '"')

> Last login: Fri Nov 01 00:00:00 2022 from 123.123.123.123

> <user>@private-cluster-iap-bastion-bastion:~$

Now you can talk to the Kubernetes cluster through this tunnel:

$ HTTPS_PROXY=localhost:8888 kubectl get namespace -A

> NAME STATUS AGE

> default Active 3h59m
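Since every kubectl call has to go through the tunnel, a small wrapper function can save some typing. This is only a sketch: kproxy and BASTION_PROXY are names invented for this example and do not exist in the repository. The default localhost:8888 is the proxy address used in the command above.

```shell
# Run kubectl through the bastion tunnel.
# The proxy address defaults to localhost:8888 and can be overridden
# through the (hypothetical) BASTION_PROXY variable.
kproxy() {
  HTTPS_PROXY="${BASTION_PROXY:-localhost:8888}" kubectl "$@"
}

# Usage, with the SSH tunnel open in another terminal:
#   kproxy get namespace
```

Keeping the proxy setting inside the function avoids exporting HTTPS_PROXY for the whole shell session, where it would also affect gcloud and terraform.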

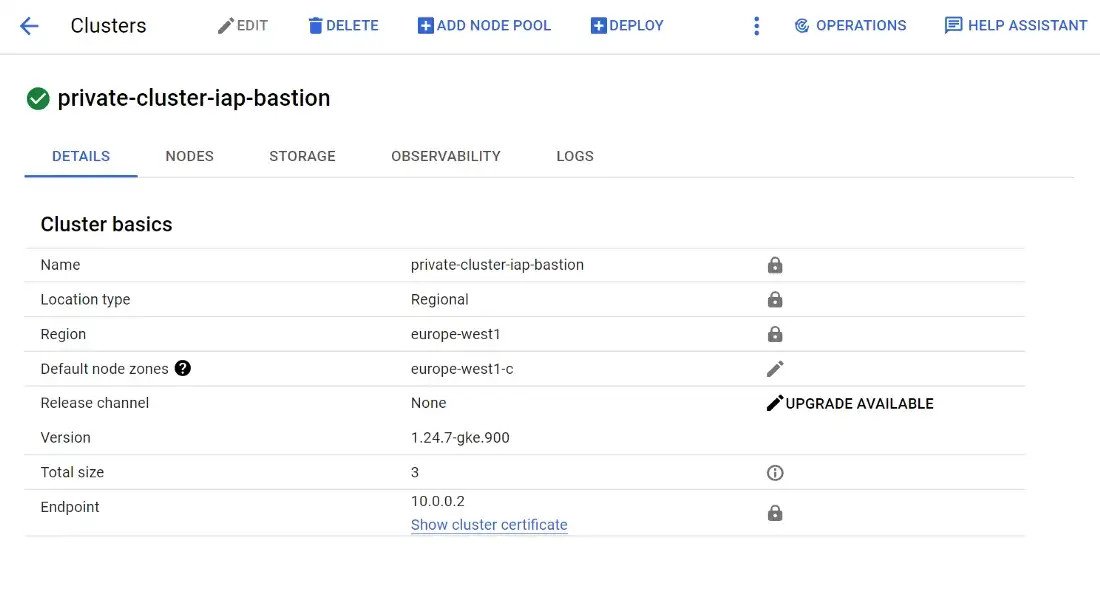

If you go to the GCP console you will see the cluster:

The only endpoint is a private IP address, accessible through Bastion Host

Manual configuration

We need to do three things manually:

- Create DNS records for your (sub)domain

- Add a managed secret for access to the ArgoCD repository

- Add a managed secret for OAuth authentication to ArgoCD

How to configure DNS records depends on your own setup. It could even be done with a managed cloud DNS service from GCP. In order to route traffic via a DNS name, you need to configure:

- An A record for mlops.<your-domain> pointing to the public IP address

- A CNAME record for *.mlops.<your-domain> pointing to the A record
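To make the two records concrete, the sketch below prints them in zone-file notation. The function name print_dns_records is invented for this example, and example.com and 203.0.113.10 are documentation placeholders; substitute your own domain and the IP address reserved by Terraform. How you enter the records depends on your DNS provider.

```shell
# Print the two required DNS records in zone-file notation.
# $1 = your domain, $2 = the reserved public IP address.
print_dns_records() {
  domain=$1
  ip=$2
  # The A record points the platform hostname at the load balancer IP.
  printf 'mlops.%s.\tA\t%s\n' "$domain" "$ip"
  # The wildcard CNAME sends every subdomain to that same A record.
  printf '*.mlops.%s.\tCNAME\tmlops.%s.\n' "$domain" "$domain"
}

# Placeholder values, replace with your own.
print_dns_records example.com 203.0.113.10
```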

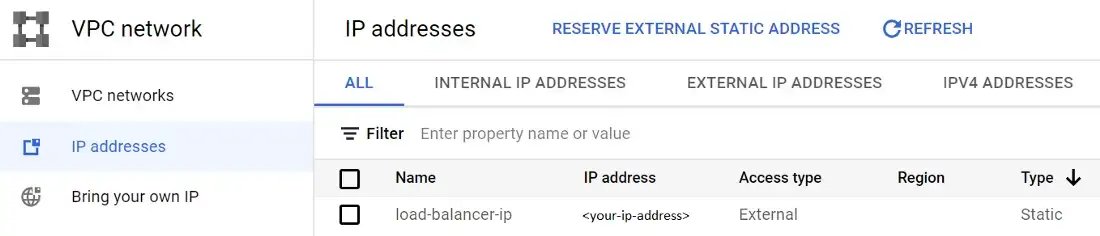

The public IP address can be obtained by running terraform output public_ip_address or from the GUI:

In your browser the IP address will be set to a concrete address

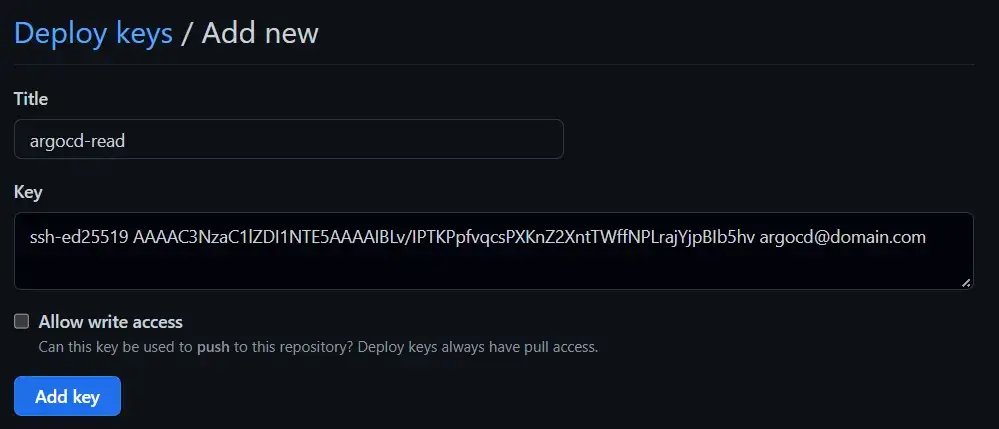

Now we will create a deploy key for the repository from which ArgoCD obtains the K8s files. First create an SSH key pair (for example with ssh-keygen). Then go to your repository keys (you need admin rights for this) and add the public key: https://github.com/<your.github.repo>/settings/keys.

Change the key to whatever value you have generated

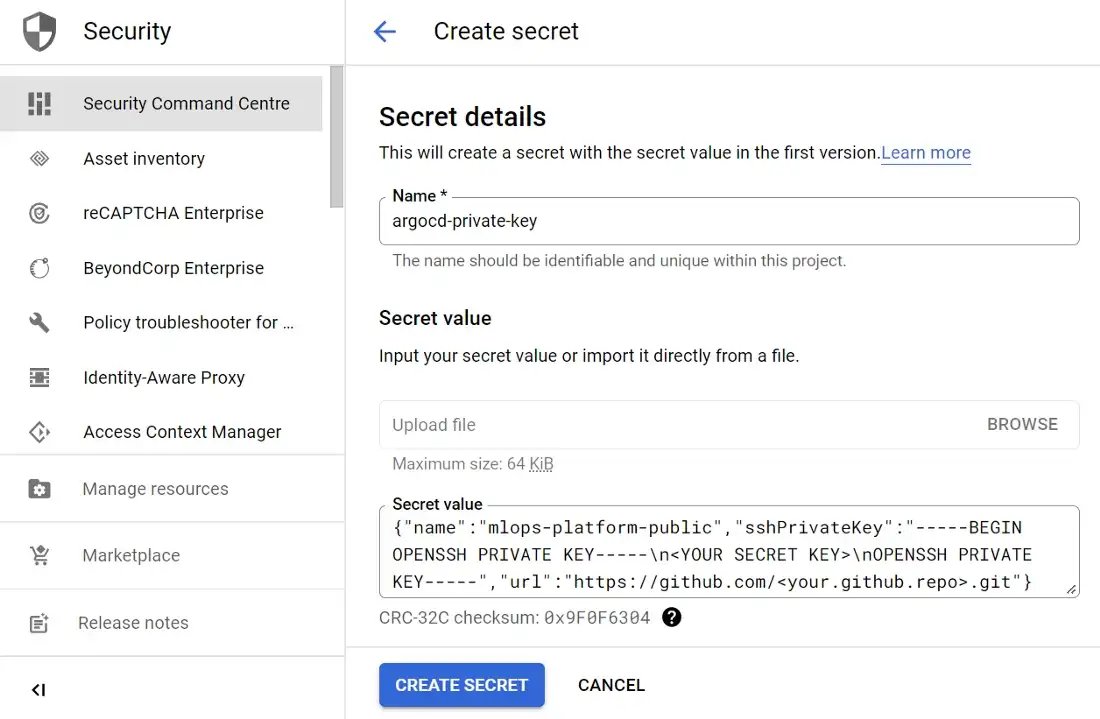

Then you need to upload the private key as a managed secret so that K8s can access it later on as an external secret:

Change the values, but make sure that all newlines are formatted as \n
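Converting the newlines by hand is error-prone, so it may help to script it. The snippet below is a sketch, not the repository's method: escape_newlines and the file name argocd-deploy-key are made up for this example. It collapses a multi-line key file into one line in which every line break is replaced by the two characters \n.

```shell
# Print a file as a single line, replacing each newline with a literal "\n".
escape_newlines() {
  awk '{ printf "%s\\n", $0 }' "$1"
}

# Usage (file name is a placeholder):
#   ssh-keygen -t ed25519 -N "" -C argocd -f ./argocd-deploy-key
#   escape_newlines ./argocd-deploy-key
```

The output can then be pasted as the private key value of the managed secret. The public half (argocd-deploy-key.pub in this example) is the one that goes into the repository deploy keys.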

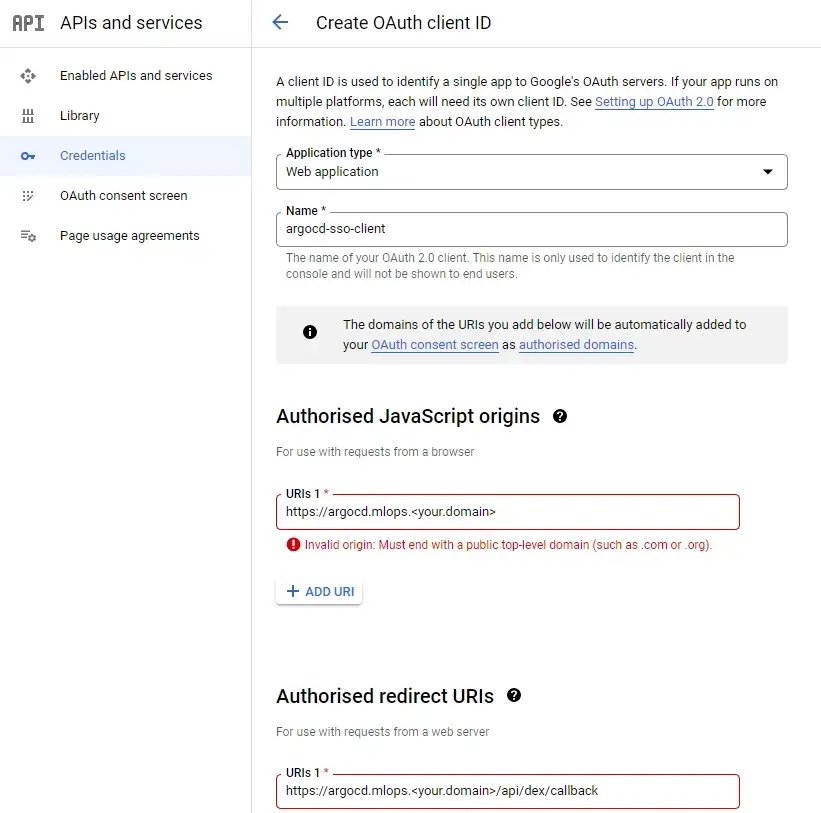

Next up is to create an OAuth credential. This credential is going to be used by ArgoCD to authenticate users through Google OAuth:

The warnings are only there because <your.domain> is not valid, change according to your situation

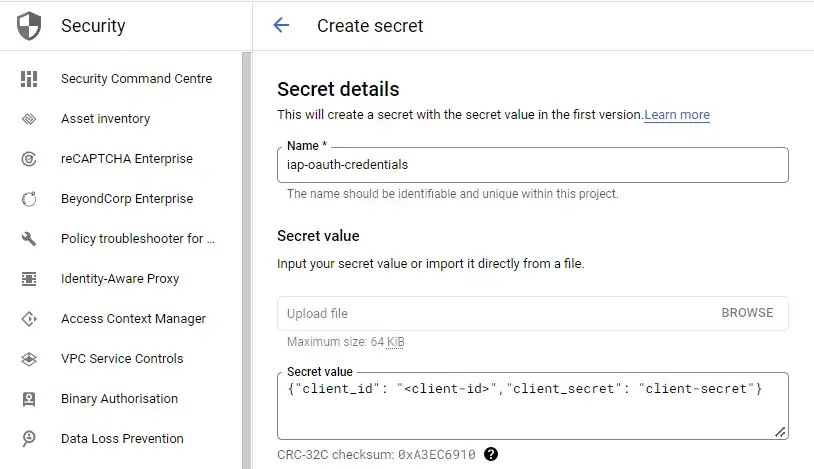

And then upload the resulting client and secret as a managed secret:

Deploying the Kubernetes components

Now that the necessary manual steps have been executed, the variables set earlier need to be updated in the K8s yaml files. Make sure to replace all references to <gke-project-id>, <your.domain> and <your.github.repo> in the argocd-provisioning folder. When this has been done, make sure that the SSH tunnel to the cluster is running, and then execute the following commands:

cd argocd-provisioning

# Provision argocd from its own folder

./create_resources.sh

# Optionally destroy again with

./delete_resources.sh
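The placeholder replacement that has to happen before running create_resources.sh can be scripted as well. The function below is a rough sketch under a few assumptions: fill_placeholders is a name invented here, the values must not contain the characters | or & (both are special in the sed expressions used), and <your.github.repo> is assumed to take the form organisation/repository, as in the GitHub URL shown earlier. Review the result with git diff before deploying.

```shell
# Replace the three placeholders in every file below a directory.
# $1 = directory, $2 = GCP project ID, $3 = domain, $4 = GitHub org/repo.
fill_placeholders() {
  dir=$1; project=$2; domain=$3; repo=$4
  # Collect matching files first so temporary .bak files are never scanned.
  files=$(grep -rlE '<gke-project-id>|<your\.domain>|<your\.github\.repo>' "$dir") || return 0
  printf '%s\n' "$files" | while IFS= read -r file; do
    # -i.bak works with both GNU and BSD sed; the backup is removed afterwards.
    sed -i.bak \
      -e "s|<gke-project-id>|$project|g" \
      -e "s|<your\.domain>|$domain|g" \
      -e "s|<your\.github\.repo>|$repo|g" \
      "$file" && rm -f "$file.bak"
  done
}

# Usage from the repository root (all values are placeholders):
#   fill_placeholders argocd-provisioning my-project example.com my-org/my-repo
```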

Executing the create_resources.sh script installs the following Helm charts, creates the external secrets and sets up Istio:

The GKE specific configurations can be seen below, notice how IAP is configured in the BackendConfig and how the Ingress uses a pre-shared-cert and global-static-ip-name to allow the automatic linking of GCP components:

These configs tell GKE how to connect the Ingress when created (of gce class).

The Backend/Frontend Configs are used in the Service and Ingress:

We allow TLS termination on the ingress and forward all on port 80 to the Istio Gateway.

If there are any issues during the deployment, check whether the references have been set correctly and whether the other components are functioning (especially ClusterExternalSecret).

Review running components

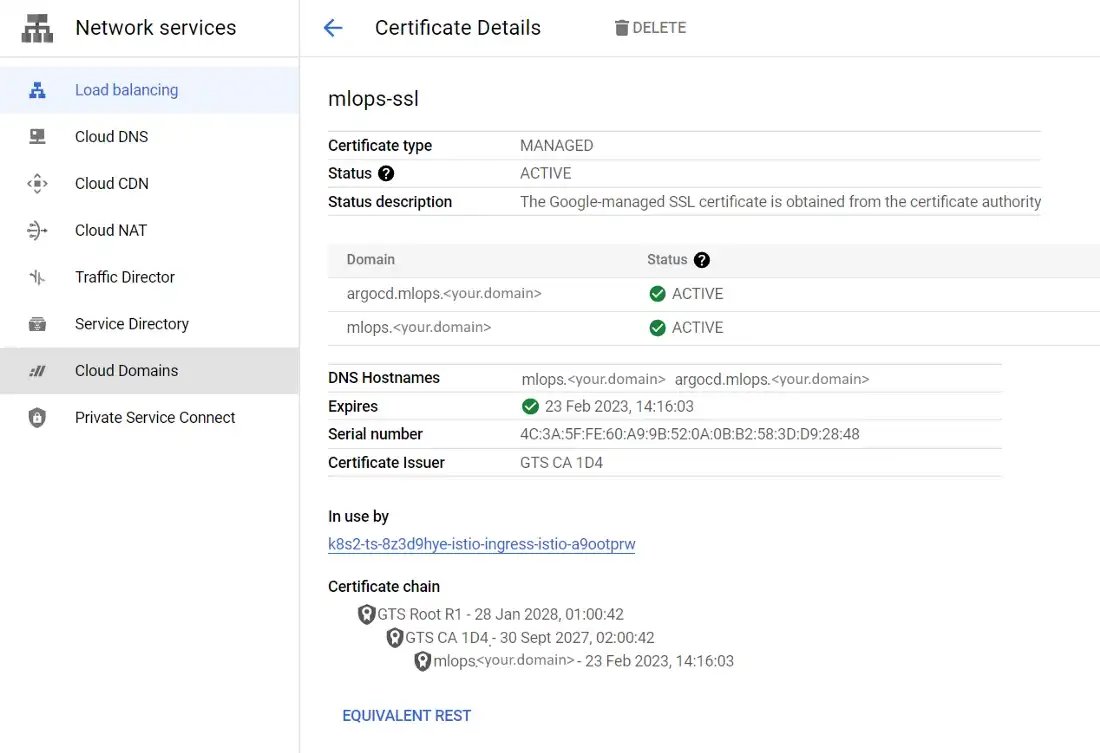

Provisioning can take some time. Certificate provisioning in particular can be slow (up to hours of wait time), depending on when you add the necessary DNS records. Let's check the GCP console to see whether everything is provisioned as desired. After the Ingress has been created, GCP has everything it needs to complete the verification of the certificate. Certificates in GCP are not trivial to find, but if you go to LoadBalancing and open the load balancing components view, you can find the certificates under sslCertificates:

If the DNS is configured correctly, GCP will provision the certificates for you
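Instead of refreshing the console, the certificate status can also be polled from the terminal. The helper below is a generic sketch: wait_for_output is a name made up for this example, and the certificate name in the usage comment is a placeholder that you need to look up in your own Terraform code. It assumes that the managed.status field of a Google-managed certificate reads ACTIVE once provisioning is complete.

```shell
# Re-run a command until it prints the expected value.
# $1 = expected output, $2 = max attempts, $3 = seconds between attempts,
# remaining arguments = the command to run.
wait_for_output() {
  expected=$1; attempts=$2; interval=$3
  shift 3
  i=1
  while :; do
    [ "$("$@" 2>/dev/null)" = "$expected" ] && return 0
    # Give up once the maximum number of attempts is reached.
    [ "$i" -ge "$attempts" ] && return 1
    i=$((i + 1))
    sleep "$interval"
  done
}

# Usage: check once a minute, for at most an hour.
#   wait_for_output ACTIVE 60 60 gcloud compute ssl-certificates describe \
#     <certificate-name> --global --format='get(managed.status)'
```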

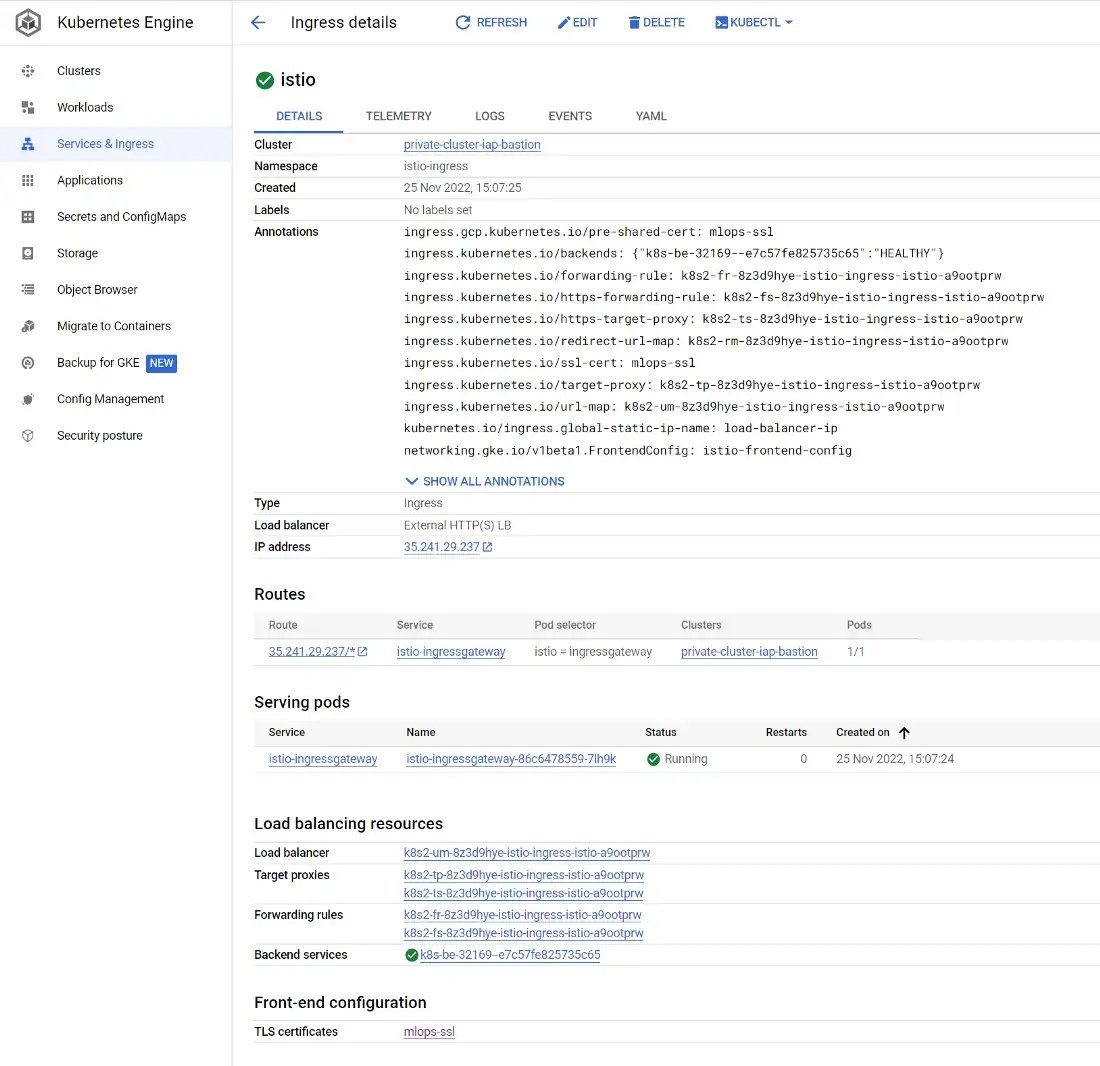

With the working certificate, the GKE Ingress can also be provisioned. Under the Kubernetes Engine Ingresses tab you can find the one and only Ingress created that secures our cluster:

Note the links to the TLS certificate and the public IP address

Now it is finally time to connect to your ArgoCD instance! The URL depends on your domain name: https://argocd.mlops.<domain-name>/. You will first be asked to log in to the Cloud IAP protected application with your Google account. Afterwards you can use Google OAuth to log in to ArgoCD itself. More automation could be used to read the OAuth token from IAP, but that is not included in this tutorial.

Use the login via Google that uses the OAuth application configured on GCP

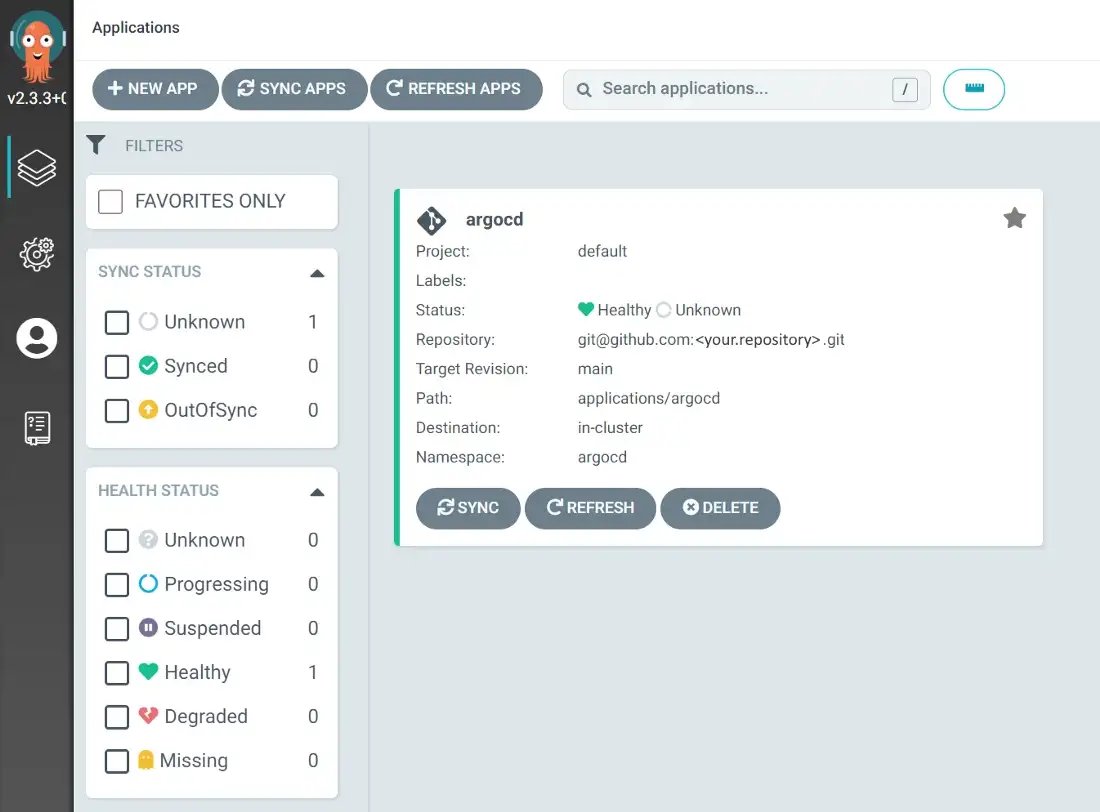

Then you will get access to ArgoCD itself:

ArgoCD ready to deploy more tools!

Conclusion

With this you should have successfully deployed the GitOps setup required to run MLOps workloads on GCP. To recap the major steps:

- Deploy the infrastructure with Terraform

- Manually configure the necessary DNS records and external secrets

- Configure Kubernetes through the Bastion Host

Now that the underlying platform is ready to be used, stay tuned for our next blog post, which will go deeper into the ML tooling itself!